About: beaks.live – the software

This is the bird box that is shown at beaks.live. It is on the side of a house in Cambourne, about 8 miles west of Cambridge, in the UK.

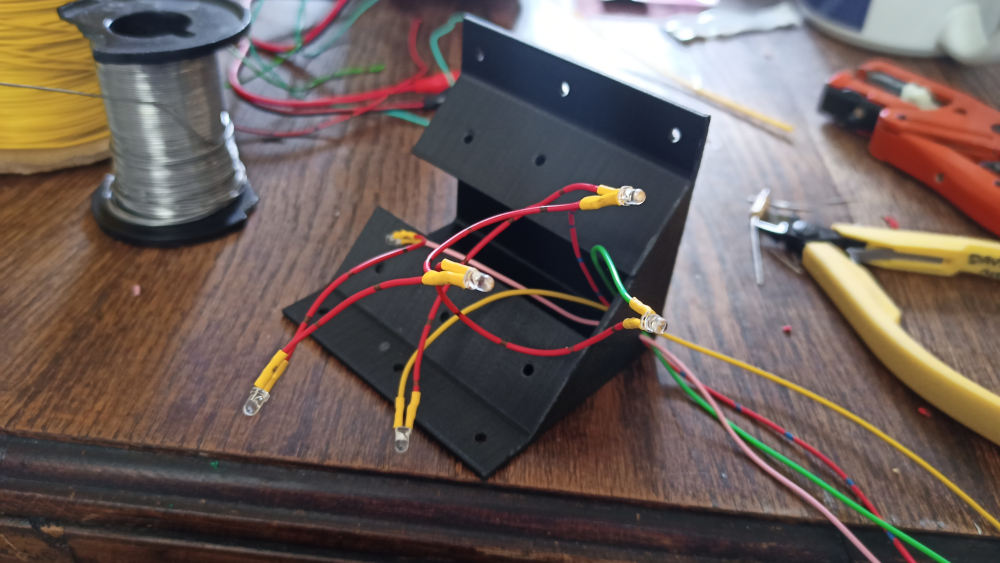

Right from the start, the plan was to get it working roughly and quickly and then improve it until it was the best I could do with the crap hardware – this being an £11 webcam connected via USB to a Raspberry Pi 4, which also drives transistors to work the cheapest infra-red LEDs I could find.

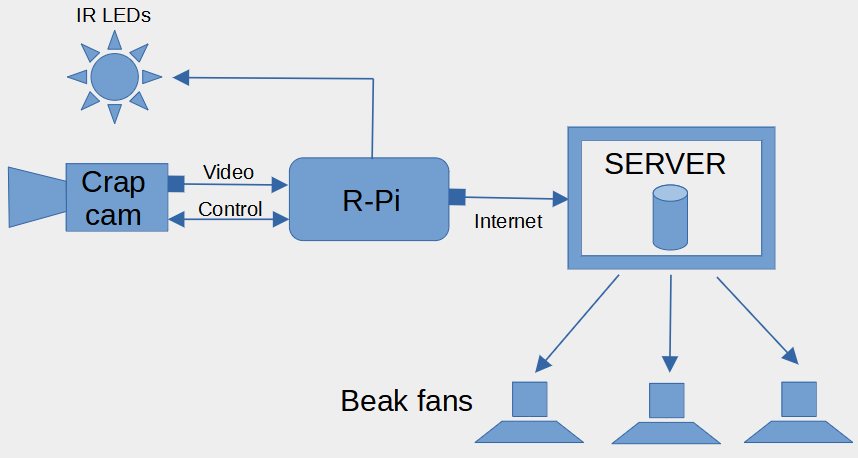

Having messed around with RTMP (no one uses it any more) and HLS (I’ll be fucked if I can get it to work) for streaming, I eventually ended up with this system:

The Raspberry Pi takes care of the camera and lighting, uploading the video to the server (a VDS hosted with Mythic Beasts), which does all the heavy lifting of looking for motion and streaming live footage to the many dozens of viewers who are eager to catch a glimpse of beak.

Did I mention the camera is crap? The automatic exposure sets itself to some random level and occasionally flashes up and down twice a second, apparently to relieve the boredom. So the R-Pi has to sort out the exposure, and luckily, you can set most of the camera settings manually via USB. Every 10 minutes the Pi records 5 seconds of video, takes 5 frames and averages the light level on each of them. It then sets the exposure, gamma, and LED levels* depending on whether it needs to be lighter or darker. Or it just leaves things as they are if it’s all hunky dory.

* the LEDs are so dim I just leave them all on all the time now.
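For the curious, the decision part of that loop looks roughly like this. It's a sketch, not the real script: the target level, tolerance, step size and exposure range are numbers I've made up for illustration, frames are treated as plain lists of 0-255 pixel values, and actually pushing the new value to the camera over USB is left out.

```python
from statistics import mean

# Hypothetical numbers: the real target and step sizes are not given here.
TARGET_LEVEL = 110   # desired average brightness on a 0-255 scale
TOLERANCE = 15       # how far off still counts as "hunky dory"


def average_light_level(frames):
    """Average brightness over several frames (each a flat list of 0-255 values)."""
    return mean(mean(frame) for frame in frames)


def decide_adjustment(level, target=TARGET_LEVEL, tolerance=TOLERANCE):
    """Return +1 to go lighter, -1 to go darker, 0 to leave things alone."""
    if level < target - tolerance:
        return 1
    if level > target + tolerance:
        return -1
    return 0


def next_exposure(current, adjustment, step=10, lowest=1, highest=5000):
    """Nudge the exposure one step in the chosen direction, clamped to a range."""
    return max(lowest, min(highest, current + adjustment * step))
```

Feed it the 5 sampled frames, get a direction back, nudge the setting, and wait 10 minutes for the next go.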

It records 5 minutes of video at a time, using FFmpeg (with some video tweaking and normalisation to make the crap camera’s video a bit nicer), which is then uploaded to the server. Funny story – I originally set up the Pi’s exposure setting software so it calculated the camera’s exposure settings from this video – this video which has been normalised. So whatever is coming out of the camera, FFmpeg “fixes” it, and then the exposure setting software thinks everything is hunky dory, despite the exposure being so wrong the video is just noise. This is why it records 5 seconds of unfixed video separately to check the exposure. A couple of months later I had forgotten this, and had the brilliant idea of using samples from the 5-minute feed rather than doing a separate 5-second one. I thought the camera had died, until I remembered the normalisation and why I didn’t do it like that originally. I look forward to doing the same thing again in July, September, November, etc.
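Here is a toy demonstration of why measuring the normalised video is useless. The `normalise` function is a crude contrast stretch I'm using as a stand-in; it is not what FFmpeg actually does, and the pixel values are made up. The point is only that once you stretch a frame to fill the range, a nearly black frame and a decent one report the same brightness.

```python
def normalise(frame):
    """Stretch a frame's pixel values to span 0-255 (a crude stand-in filter)."""
    lo, hi = min(frame), max(frame)
    if hi == lo:
        return [0 for _ in frame]  # flat frame: nothing to stretch
    return [round((p - lo) * 255 / (hi - lo)) for p in frame]


def average(frame):
    """Mean pixel value of a frame."""
    return sum(frame) / len(frame)


# Made-up sample frames: one nearly black, one sensibly exposed.
underexposed = [2, 3, 4, 5, 6]
well_exposed = [60, 90, 120, 150, 180]

raw_dark, raw_good = average(underexposed), average(well_exposed)
fixed_dark = average(normalise(underexposed))
fixed_good = average(normalise(well_exposed))

# The raw averages differ wildly; the normalised ones are identical.
print(raw_dark, raw_good, fixed_dark == fixed_good)
```

So the exposure check has to look at the raw frames, which is exactly what the separate 5-second recording is for.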

Incidentally, all this software is written in a mixture of Python and Bash scripting because I am a masochistic lunatic. I love Bash – it’s just mad, with random shit like functions looking like “function my_function () { …” where the ()’s do nothing because you can’t put anything inside them – they are purely decorative.

But I digress. The server has the latest video uploaded to it. It keeps the last 4 uploads so there is 20 minutes of buffer. It deletes the oldest one once it has been processed for motion detection. The server keeps a watchdog timer, and the Pi will only upload a video if that timer has been updated recently enough. This is to stop the server being filled up with files if it reboots and the processing stops or something. Each 5-minute chunk is about 100 MB.
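In sketch form, the two bits of housekeeping come out something like this. The 15-minute watchdog limit is an assumption (I haven't said what the real one is), and the function names are made up for the example; the "keep 4" comes from the buffer described above.

```python
import time

MAX_WATCHDOG_AGE = 15 * 60   # seconds; hypothetical limit
KEEP_UPLOADS = 4             # 4 x 5 minutes = 20 minutes of buffer


def server_is_alive(last_watchdog_update, now=None, max_age=MAX_WATCHDOG_AGE):
    """True if the watchdog was updated recently enough to allow an upload."""
    now = time.time() if now is None else now
    return (now - last_watchdog_update) <= max_age


def uploads_to_delete(uploads, processed, keep=KEEP_UPLOADS):
    """Uploads beyond the newest `keep` that have already been scanned for motion.

    `uploads` is ordered oldest to newest; `processed` is a set of names.
    """
    surplus = uploads[:-keep] if len(uploads) > keep else []
    return [name for name in surplus if name in processed]
```

The Pi would call something like `server_is_alive` before each upload, and the server would call something like `uploads_to_delete` after each motion scan.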

The motion detection is done with DVR-Scan and hits are processed to generate thumbnails and a static web page. Anything less than 30 seconds long is discarded to get rid of most of the dross. Videos older than 25 hours are deleted so there’s a rolling list of videos.
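The filtering after the scan is simple enough to sketch. This is not DVR-Scan's API, just the two rules above applied to plain data: I'm assuming each hit boils down to a (start, end) pair in seconds and that each saved clip has a creation time, and the function names are invented for the example.

```python
from datetime import datetime, timedelta

MIN_EVENT_SECONDS = 30               # anything shorter is dross
MAX_CLIP_AGE = timedelta(hours=25)   # rolling window of clips


def keep_events(events, min_seconds=MIN_EVENT_SECONDS):
    """Drop motion events shorter than the minimum; each event is (start_s, end_s)."""
    return [(start, end) for start, end in events if end - start >= min_seconds]


def expired_clips(clips, now, max_age=MAX_CLIP_AGE):
    """Names of clips older than the cut-off; `clips` maps name -> creation datetime."""
    return sorted(name for name, created in clips.items() if now - created > max_age)
```

Whatever survives `keep_events` gets a thumbnail and a slot on the static page; whatever `expired_clips` returns gets deleted.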

The live page is also static and uses video.js for the player. The location of the current 5-minute chunk is obtained using an XMLHttpRequest, then the video is loaded with JS. When it gets to the end, the JS gets the next chunk and plays it, with a minor blip for the viewer.

The “live” video is actually always 10-15 minutes in the past. It takes 5 minutes to record a chunk before it’s uploaded, and then the server tells the player to play the previously uploaded one, so you don’t start watching one that’s still uploading.
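To show where the 10-15 minutes comes from, here is the arithmetic as a sketch. It assumes upload time is negligible and that the newest file on the server is the chunk that has just finished recording; the function names are made up.

```python
CHUNK_MINUTES = 5


def chunk_to_play(uploads):
    """Pick the previously uploaded chunk, skipping the newest (may still be uploading).

    `uploads` is ordered oldest to newest.
    """
    if len(uploads) < 2:
        return None
    return uploads[-2]


def delay_minutes(minutes_into_current_recording, chunk_minutes=CHUNK_MINUTES):
    """How far in the past playback starts.

    One chunk is being recorded, the one before it is the newest upload,
    and the one before that is what the viewer gets from its beginning.
    """
    return 2 * chunk_minutes + minutes_into_current_recording
```

If you load the page just as a new recording starts you are 10 minutes behind; load it just before that recording finishes and you are nearly 15 behind.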

It’s a bit like the HLS streaming system, except there’s hideous latency and mine works. If you want to mess it up, right click and choose “show all controls” and then slide the slider to the end. I’ve no idea why I’ve told you that.